File server monitoring is crucial for organizations everywhere. It allows admins to ensure not only that servers and files are available, but also that they are performing at their best. This way, they know at all times that their data is stored safely and is easily accessible.

Successful admins know that, in order to make monitoring work for them, they need to automate it to the maximum. This way, they free themselves up from time-consuming tasks, enabling them to dedicate their hours to work on tasks where they are actively needed, such as identifying solutions and optimizing operations.

Whether you want to start monitoring from zero or upgrade your file server monitoring strategies, this article will offer you valuable insights on how to make the most of file server monitoring software and ensure that your file servers are always working properly.

TL;DR:

- File server monitoring is crucial for maintaining data availability, security, and overall performance within an organization’s IT environment.

- By keeping an eye on hardware, operating systems, and file server services, monitoring helps identify unusual activity in CPU usage, memory, storage, network, and file operations.

- With real-time tracking of file access and customizable alerts, administrators can swiftly address unauthorized changes, which is vital for preserving data integrity and meeting compliance standards.

- Additionally, forecasting and trend analysis aid in capacity planning, helping to avoid storage shortages and enhance performance.

What are file servers?

File servers are systems responsible for storing and managing data files. Their purpose is to offer file access rights to other terminals sharing the same network or using the Internet.

Users that are granted access rights by the administrator may use and share files directly via the server or via its file system access permission function, without needing to actually download and physically transfer documents. This encourages collaboration among team members and the ability to have constant access to data.

Depending on the protocols used, file servers may be set up in different ways. For example, some companies choose to continue using FTP which, although quite old, is easy to manage.

To make FTP servers more secure and harder to break into, IT professionals often use File Transfer Protocol extensions, like FTPS (File Transfer Protocol Secure). This solution supports Transport Layer Security (TLS) and Secure Sockets Layer (SSL). In closed networks with a Windows server, file servers with CIFS/SMB (Server Message Block) are often used, while on Linux systems the Network File System (NFS) is usually preferred.

One of the most used protocols for file servers, especially since it allows connections and downloads via the Internet, is HTTP. It is as user-friendly as FTP, but offers additional security features.

Depending on the size of the business, file servers may either be virtual or dedicated on-premises servers. Some file servers can also support databases, securely managing and storing database information alongside file sharing. Smaller companies may also use on-premises workstations that satisfy the network’s storing needs. While it is possible to host several file servers on the same hardware server and also install other software servers under the same operating system, it is considered quite risky. In such a case, any form of failure affects multiple application servers that are hosted together, thus increasing the chances of disrupting the business’s operations.

On-premises file servers are easy to use and set up, but maintaining them may prove quite inflexible and difficult. Depending on the size of your company, the number of users and the volume of data, the effort required to maintain the folder and directory structure increases. To address these challenges, monitoring directories is important to safeguard sensitive data and detect suspicious activities. The number of user shares can also quickly become confusing.

Moreover, if multiple users want file access at the same time, the traffic might generate bottlenecks.

When using on-premises workstations, configuring audit settings on each computer and file share is essential for accurate data collection and security oversight.

Types of file servers

File servers come in several forms, each suited to different organizational needs and IT environments. The most common types include dedicated file servers, non-dedicated file servers, and virtual file servers.

Dedicated file servers are designed primarily to handle file storage and sharing, ensuring optimal performance and security for file monitoring and management tasks.

Non-dedicated file servers, on the other hand, perform multiple functions — such as acting as both a file server and a database server — making them a flexible option for smaller organizations or those with limited resources.

Virtual file servers are software-based solutions, typically running on virtual machines or containers, that provide file server functionality without requiring dedicated physical hardware. While the underlying data still resides on physical or network-attached storage systems, these virtual environments have become increasingly popular as they allow businesses to scale file storage and management capabilities quickly and efficiently.

Understanding the differences between physical and virtual file servers is essential for selecting the right monitoring tools and strategies, ensuring that your file monitoring system is tailored to your specific server setup and business requirements.

What is file server monitoring?

File server monitoring is the practice of collecting and analyzing data to ensure that file servers operate within their optimal parameters and deliver their intended functions. A monitoring tool is essential for tracking file and server activity, maintaining system integrity, and detecting unauthorized file changes. To ensure security and performance, monitoring covers aspects of the operating system, metrics on CPU, memory, network, and disk space and activity, as well as network shares, log files, and event logs.

Who monitors file servers?

Any company that uses file servers must monitor them because, by doing so, IT professionals may see whether servers are configured correctly and are able to locate issues when they arise. This means that, no matter the size of your business, either an SMB or an enterprise, your IT department must ensure that they have the right file server monitoring strategy in place and that they use the right tools to stay updated on key metrics.

Why is it important to monitor file servers?

Monitoring file servers is important because they are extremely valuable assets for a company’s IT infrastructure, as all data is located in them. When working properly, file servers ensure that users have constant, fast access to files and folders, that they may store new files, and sync with remote access locations.

At the other end of the spectrum, anything that negatively impacts the activity of file servers may generate operational disruptions that cause frustration among team members, partners and customers, affecting the way the company is perceived and its bottom line. Not being able to access documents and resources may lead to delivery delays and lost business.

To protect companies against potential crises generated by file server errors and issues, IT administrators need to monitor them, ensuring that hardware resources are sufficient and that their performance is in the optimal ranges. As part of a comprehensive security framework, it is also necessary to determine the level of access and permissions users have to files and resources.

In this context, IT administrators need to pay much attention to CPU, RAM, hard drives, and network access. These components should be continuously monitored to detect suspicious activities and maintain optimal performance. Any issues regarding these components create bottlenecks and affect the activity associated with file servers, thus generating disruptions in operations.

How do I monitor a file server?

Similarly to server monitoring, the best way to monitor file servers is through dedicated monitoring tools. These tools are specialized software solutions used for monitoring and managing files or network systems. They bring significant value to the activity of IT administrators, because they offer an in-depth view of the status of all file servers, while also detecting threats significantly faster than any form of human supervision.

When it comes to file server monitoring, it is important to focus on 3 key layers: the hardware, the operating system and the specific services and applications you need to check on a file server.

File activity monitoring and the ability to track files are crucial for detecting unauthorized file changes and maintaining data integrity. It may be worth adding a strategy for file activity monitoring, especially for security reasons, so that accesses are identified and unauthorized ones are quickly reported to the right team. The last modification date is another parameter that is well worth checking in your monitoring efforts.
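As a rough illustration of how modification dates can be checked, the following Python sketch walks a directory tree and reports the file count together with the age of the newest and oldest file. The function name `summarize_tree` and the returned keys are hypothetical and chosen only for this example; a monitoring tool would collect such values through its own agents.

```python
import os
import time


def summarize_tree(root, now=None):
    """Return the file count and the newest/oldest modification age in seconds.

    Hypothetical helper for illustration only.
    """
    now = time.time() if now is None else now
    ages = []
    for dirpath, _dirnames, filenames in os.walk(root):
        for name in filenames:
            path = os.path.join(dirpath, name)
            try:
                ages.append(now - os.stat(path).st_mtime)
            except OSError:
                continue  # file vanished or is unreadable; skip it
    if not ages:
        return {"count": 0, "newest_age_s": None, "oldest_age_s": None}
    return {
        "count": len(ages),
        "newest_age_s": min(ages),
        "oldest_age_s": max(ages),
    }
```

A share whose newest file is unexpectedly old may point to a stalled sync or backup job, while a sudden jump in the file count may deserve a closer look.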

File server hardware monitoring

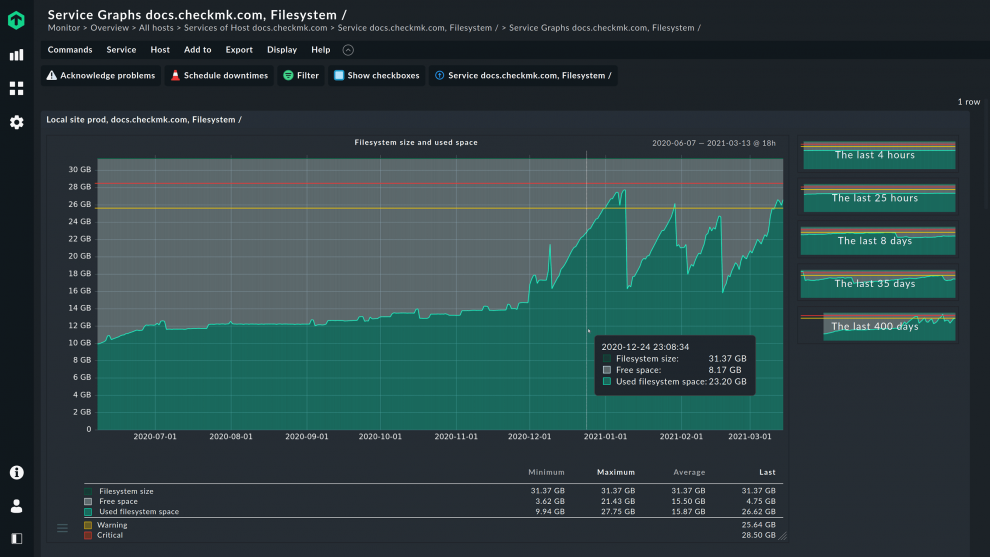

When monitoring file servers, it is crucial to keep an eye on storage utilization and hardware performance. The first helps you ensure that there is always enough storage available for the files. The second refers to CPU, RAM, hard drives, and network access. Problems in these areas create bottlenecks and prevent file servers from performing optimally.

Still, focusing on the actual values is not enough. Experienced IT administrators know that they need to keep an eye on metric trends and compare them to identify anomalies early on. For example, if the throughput of a hard disk unexpectedly increases significantly, this may indicate a problem. Moreover, checking the network bandwidth of the server is also important to identify bottlenecks, and ensure that everyone has a positive experience accessing the files on the server. File monitoring systems can also track file count and file age to provide insights into file characteristics and lifecycle.
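One simple way to compare a current value against its recent trend is a z-score check, sketched below in Python. This is only an illustrative assumption about how an anomaly could be flagged, not a description of how any particular monitoring product does it; the function name and the default threshold of three standard deviations are hypothetical.

```python
from statistics import mean, stdev


def is_anomalous(history, current, z_threshold=3.0):
    """Flag `current` if it deviates strongly from the values in `history`.

    `history` is a list of past measurements (for example disk throughput
    in MB/s). Hypothetical helper for illustration only.
    """
    if len(history) < 2:
        return False  # not enough data to define a baseline
    baseline = mean(history)
    spread = stdev(history)
    if spread == 0:
        # All past values were identical; any change counts as a deviation.
        return current != baseline
    return abs(current - baseline) / spread > z_threshold
```

For example, with a history of `[100, 102, 98, 101, 99]`, a new reading of `250` would be flagged, while `101` would not.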

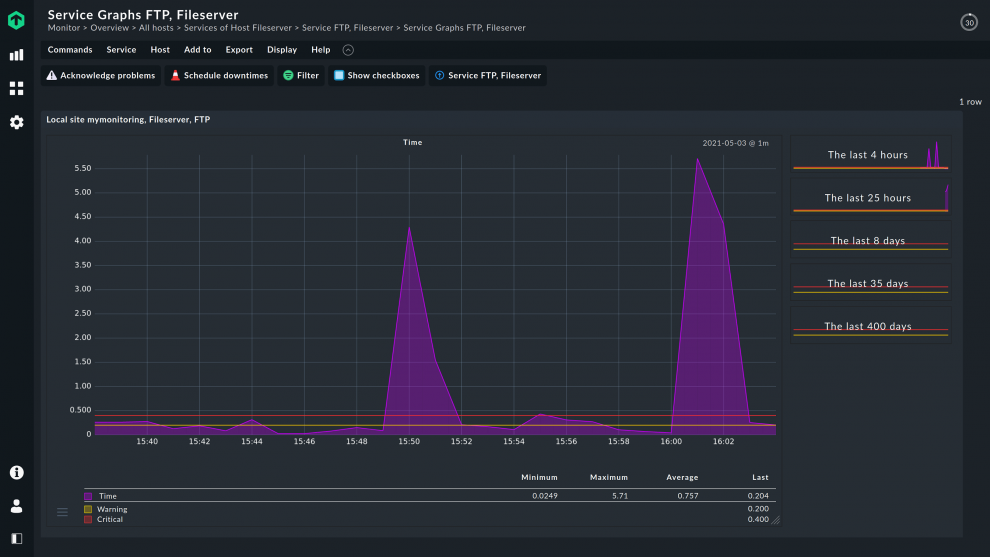

A solution like Checkmk enables you to record performance peaks and be automatically informed about anomalies. For example, if you have unusually high data movements, Checkmk can trigger an alarm if you have configured it to do so.

You can also investigate irregularities in detail thanks to the extensive graphing functions in Checkmk and benefit from the automatically generated reports which enable you to investigate issues and errors.

For file servers, capacity management is also crucial, since forecasting the development of the disk space can help IT administrators avoid unpleasant surprises and ensure there are no operational disruptions.

By default, Checkmk uses historical data to create a forecast for the future. Discover more by viewing the forecasting chapter of Checkmk’s manual.
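To make the idea of a capacity forecast concrete, here is a minimal Python sketch that fits a straight line to past disk usage and estimates how many days remain until the disk is full. It is a simplified assumption for illustration and does not represent Checkmk's forecasting method; the function name `days_until_full` and the sample format are hypothetical.

```python
def days_until_full(samples, capacity_gb):
    """Estimate the days left until `capacity_gb` is reached.

    `samples` is a list of (day, used_gb) pairs ordered by day.
    Returns None if usage is flat, shrinking, or there is too little data.
    Hypothetical helper for illustration only.
    """
    n = len(samples)
    if n < 2:
        return None
    mean_x = sum(x for x, _ in samples) / n
    mean_y = sum(y for _, y in samples) / n
    sxx = sum((x - mean_x) ** 2 for x, _ in samples)
    if sxx == 0:
        return None  # all samples taken on the same day
    # Least-squares slope: growth in GB per day.
    slope = sum((x - mean_x) * (y - mean_y) for x, y in samples) / sxx
    if slope <= 0:
        return None
    intercept = mean_y - slope * mean_x
    full_day = (capacity_gb - intercept) / slope
    return max(0.0, full_day - samples[-1][0])
```

With samples of 100, 110, 120 and 130 GB on days 0 to 3 and a 200 GB disk, the estimate is 7 days from the last sample. Real usage is rarely this linear, so such an estimate is best treated as an early warning rather than a precise date.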

File server OS monitoring

On the operating system level, file server monitoring should include:

- System resources: CPU, memory, disk I/O

- File systems & storage: free space, mount availability

- Network & protocols: interfaces, SMB/NFS services

- System services & processes: critical daemons, authentication services

- Updates & patches: OS and service update status

- Security & compliance: audit logs, access events, security incidents.
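The free-space item from the list above can be sketched in a few lines of Python using the standard library's `shutil.disk_usage`. The function names and the 10 percent default are hypothetical and only serve as an example of such a check.

```python
import shutil


def free_space_percent(path):
    """Return the percentage of free space on the file system holding `path`."""
    usage = shutil.disk_usage(path)
    if usage.total == 0:
        return 0.0  # avoid division by zero on unusual file systems
    return 100.0 * usage.free / usage.total


def has_enough_space(path, min_free_percent=10.0):
    """Return True if at least `min_free_percent` of the file system is free."""
    return free_space_percent(path) >= min_free_percent
```

Calling `has_enough_space("/srv/share")` (the path is a placeholder) would then tell you whether the share's file system is still above the chosen limit.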

Moreover, since different operating systems are used in practice, each comes with its particularities.

For Linux systems, for example, cron jobs are extremely important, as they are often responsible for creating backups. When you run data backups regularly, a crucial step is to check whether they have been created successfully.
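A basic backup check could look like the following Python sketch, which verifies that a backup file exists, is not empty, and is recent enough. The function name, the 26-hour default (a daily job plus some slack), and the size limit are assumptions made for this example; it does not verify that the backup can actually be restored.

```python
import os
import time


def backup_is_fresh(path, max_age_hours=26, min_size_bytes=1, now=None):
    """Return True if the backup at `path` exists, is non-empty and recent.

    Hypothetical helper for illustration only.
    """
    try:
        st = os.stat(path)
    except OSError:
        return False  # missing or inaccessible backup file
    now = time.time() if now is None else now
    age_hours = (now - st.st_mtime) / 3600
    return st.st_size >= min_size_bytes and age_hours <= max_age_hours
```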

File server services and app monitoring

Monitoring file server services and apps is essential, as they are also installed on the operating system and access hardware components. Excellent monitoring means understanding what resources they consume.

For example, calculating the RAM needed by a certain file server service or app helps IT administrators assess their IT ecosystem, offering them the necessary information for scheduling, decision-making and risk reduction.
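On Linux, the resident memory of a process can be read from the `VmRSS` line in `/proc/<pid>/status`. The Python sketch below shows one way to extract that value; the function names are hypothetical, and the approach is Linux-specific and covers a single process only, so a service that runs several processes would need its values summed.

```python
def parse_rss_kib(status_text):
    """Extract the VmRSS value (in KiB) from the text of /proc/<pid>/status.

    Returns None if the line is absent, as is the case for kernel threads.
    """
    for line in status_text.splitlines():
        if line.startswith("VmRSS:"):
            return int(line.split()[1])
    return None


def process_rss_kib(pid):
    """Read the resident memory of the process `pid` on a Linux system."""
    with open(f"/proc/{pid}/status") as f:
        return parse_rss_kib(f.read())
```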

Ensuring that the used protocols (such as FTP) are working is also highly relevant.
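The most basic form of such a check is to test whether the service's TCP port accepts connections, as in this Python sketch. The function name is hypothetical, and the check only confirms reachability: it does not prove that the protocol itself answers correctly, which is what a dedicated active check would verify.

```python
import socket


def port_is_open(host, port, timeout=3.0):
    """Return True if a TCP connection to (host, port) can be established."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        return False  # refused, timed out, or host not resolvable
```

Typical ports to test would be 21 for the FTP control channel, 445 for SMB, and 2049 for NFS, for example `port_is_open("fileserver.example.com", 445)` with a placeholder host name.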

File access monitoring

File access monitoring is a vital aspect of file server management, providing visibility into who is accessing, modifying, or deleting important files and folders stored on your server.

By tracking file access events — such as reads, writes, and deletions — administrators can ensure that only authorized users interact with sensitive data. This level of monitoring is crucial for maintaining security, as it allows you to quickly identify suspicious activity or unauthorized access attempts.

Modern file monitoring systems and software offer robust tools for tracking file access, often integrating audit logs that record every access event in detail. These audit logs are invaluable for investigating incidents, validating user actions, and ensuring that your data remains protected.

By implementing comprehensive file access monitoring, organizations can proactively audit their files and folders, respond swiftly to potential threats, and maintain full control over the data stored on their file servers.
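To illustrate how audit events can be checked against access rules, the following Python sketch flags events in which a user touches a protected path without being on its allow list. The event format (dictionaries with `user`, `action` and `path` keys) and the function name are assumptions for this example; real audit logs differ between operating systems and tools.

```python
def unauthorized_events(events, allowed_users_by_prefix):
    """Return the events where a user accessed a protected path without permission.

    `events` is a list of dicts with "user", "action" and "path" keys.
    `allowed_users_by_prefix` maps a path prefix to the set of allowed users.
    Hypothetical data model for illustration only.
    """
    flagged = []
    for event in events:
        for prefix, allowed in allowed_users_by_prefix.items():
            if event["path"].startswith(prefix) and event["user"] not in allowed:
                flagged.append(event)
                break  # one violation is enough to flag the event
    return flagged
```

For instance, with the rule `{"/srv/hr/": {"alice"}}`, a write by `bob` to `/srv/hr/salaries.xlsx` would be flagged, while the same action by `alice` would pass.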

Real-time monitoring and custom alerts

Real-time monitoring is a cornerstone of effective file server management, enabling administrators to detect modifications to files and folders as they happen. With real-time file monitoring, any changes — such as adjustments to file size, modified date, or last modified date — are immediately identified, allowing for rapid response to potential security incidents.
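The underlying idea of change detection can be sketched in Python by comparing two snapshots of file sizes and modification times. This polling approach is a simplification chosen for the example: it is not truly real-time, and production tools typically rely on event-based operating system interfaces instead. The function names are hypothetical.

```python
import os


def take_snapshot(root):
    """Map every file path below `root` to its (size, mtime in ns)."""
    snapshot = {}
    for dirpath, _dirnames, filenames in os.walk(root):
        for name in filenames:
            path = os.path.join(dirpath, name)
            try:
                st = os.stat(path)
            except OSError:
                continue  # file vanished between listing and stat
            snapshot[path] = (st.st_size, st.st_mtime_ns)
    return snapshot


def diff_snapshots(old, new):
    """Return the created, deleted and modified paths between two snapshots."""
    return {
        "created": sorted(set(new) - set(old)),
        "deleted": sorted(set(old) - set(new)),
        "modified": sorted(p for p in old.keys() & new.keys() if old[p] != new[p]),
    }
```

Taking a snapshot at regular intervals and diffing it against the previous one yields the list of changes that occurred in between.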

Custom alerts can be configured to notify administrators whenever predefined thresholds are crossed, such as unauthorized access to important files or unexpected modifications to critical data. These alerts can be tailored to monitor specific files, folders, or types of file activity, ensuring that you are always informed about changes that could impact the security or integrity of your data.

By leveraging real-time monitoring and custom alerts, organizations can set up a proactive defense against data breaches, quickly identify and address issues, and maintain the highest level of security for their files and folders.
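Threshold-based alerting usually comes down to mapping a measured value to a state. The Python sketch below shows this with a warning and a critical level; the function name is hypothetical, and the state names follow the OK/WARN/CRIT convention common in monitoring tools.

```python
def evaluate(value, warn, crit):
    """Map a measured value to a state, given warning and critical levels.

    Assumes higher values are worse (for example disk usage in percent).
    """
    if value >= crit:
        return "CRIT"
    if value >= warn:
        return "WARN"
    return "OK"
```

With levels of 80 and 90 percent for disk usage, a reading of 85 would return `"WARN"` and a reading of 95 would return `"CRIT"`.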

Auditing and compliance in file server monitoring

Auditing and compliance are essential elements of any robust monitoring strategy. By maintaining detailed audit logs of all file access events — including reads, writes, and deletions — administrators can track and verify every action taken on files and folders. These audit logs not only help organizations monitor file activity and identify insider threats, but also serve as critical evidence for demonstrating compliance with industry regulations such as GDPR, HIPAA, and others.

Effective auditing enables organizations to track changes, monitor user access, and ensure that all file and folder activity aligns with internal policies and external legal requirements. Regular auditing and monitoring of file servers help to quickly identify unauthorized actions, prevent data breaches, and support a culture of accountability and transparency within the IT environment.

Scalability, performance, and cloud storage integration

As organizations grow and their data needs evolve, file server monitoring solutions must be scalable and capable of maintaining high performance, even as the volume of files and file activity increases. Modern monitoring tools are designed to handle large-scale environments, supporting both on-premises and virtual file servers without compromising speed or reliability.

Cloud storage integration is another key feature, allowing administrators to monitor files and folders stored in cloud platforms like Google Drive alongside those on local servers. This unified approach to file monitoring ensures that all important files — regardless of where they are stored — are tracked, managed, and protected under a single monitoring system.

With support for virtual environments and the ability to set custom alerts, these tools provide comprehensive visibility and control, enabling organizations to manage their data securely and efficiently across all storage environments.

By leveraging scalable, cloud-integrated file server monitoring solutions, businesses can ensure the security, integrity, and availability of their data at all times.

Getting started with file server monitoring

Since different IT environments and file servers require different approaches, it is best to choose a solution that adapts to your infrastructure, ensuring configuration is intuitive and fast. It is also important to verify which operating systems and configurations are supported by the monitoring solution.

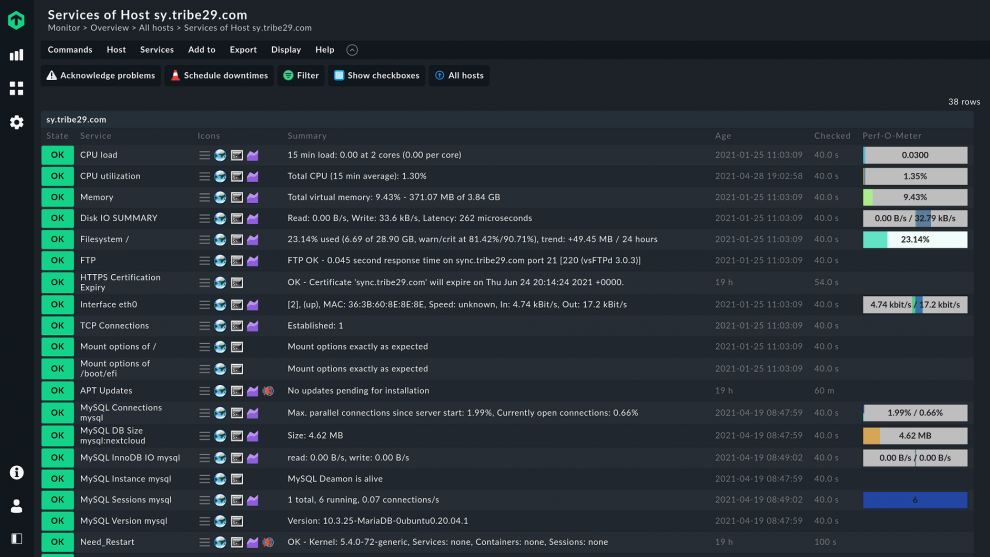

No matter what file servers you are using – Windows or Linux – thanks to Checkmk's rule-based approach, installation is easy even if you have to monitor a lot of servers. With just a few clicks, you can include numerous file servers into the monitoring and monitor them very precisely.

Next, you have to identify the KPIs that are relevant for you. Some metrics that you need to take into account are CPU utilization, memory load, disk utilization, average response time, error rates, disk uptime, IP requests etc. Checkmk automatically detects relevant metrics on your servers and suggests reasonable thresholds for alerts.

Moreover, the thresholds provided for file server monitoring by Checkmk can be customized to your needs. The admin can choose between various mechanisms for the alerting, and guarantee that the communication flows are effective and generate results. For example, a telephone loop can be initiated if a hard disk of a file server fails or the system is down. The responsible person on-call gets notified immediately. This ensures that the IT team can react quickly to the incident.

Click here for a step-by-step tutorial on how to use Checkmk to set up comprehensive monitoring of your file servers.

Choose the right file server monitoring software

Choosing the right file server monitoring software is crucial for delivering success and keeping your file servers and IT environment protected. This is why there are several things you need to take into account before making your choice.

The solution must be able to cover your infrastructure and support all commonly used operating systems and hardware, working for both virtual environments and on-premises servers.

Checkmk offers you solutions for different operating systems, ensuring trustworthy Windows file server monitoring, as well as Linux.

Suitable monitoring integrations are available for Checkmk for all common server hardware manufacturers such as Dell, IBM, HPE, Cisco, or Huawei. Checkmk also includes file monitoring plug-ins for management boards of enterprise server solutions, such as HPE-iLO boards. So, with just a few clicks, you can have all the necessary hardware information of your servers in a single monitoring view.

Checkmk additionally comes with active checks for all common file server protocols such as FTP, SFTP, CIFS/SMB, or HTTP, which you can easily set up via Checkmk's graphical user interface. This way, Checkmk actively verifies whether the file server's transfer protocol is working. These active checks are then available as services of the host and thus complement your file monitoring. Monitoring systems can also help detect unauthorized changes, indicating when it might be necessary to restore files to their original state.

Checkmk also includes forecasting, which you can use to predict your capacity usage, for example. Our solution makes predictions based on historical data, taking the development over time into account.

Good file server monitoring software requires intelligent alarm management that enables you to set thresholds and notification methods in case of abnormal activities.

Checkmk uses native agents and monitoring protocols to collect data. It also provides default thresholds for alarms, which you can of course customize according to your needs.

Final thoughts

Automated solutions are a must for file server monitoring. Identifying the best one for your IT environment is extremely important, since it ensures that this part of your job is successfully done.

Checkmk is the intuitive, easy-to-use monitoring software that guarantees you know everything that happens with your file servers. The constant, complete visibility into your file servers enables you to make the best decisions, and to secure your IT environment.

FAQ

What is file and folder monitoring?

File and folder monitoring enables you to see, in real time, when a user accesses and uses sensitive files and folders on Windows servers, virtual servers, or in your cloud storage. It also records access events, such as file ownership changes, file reads, writes, deletions, renames, changes to effective permissions, modifications, or attribute changes. Importantly, file and folder monitoring detects and audits file deletions, helping to identify unauthorized file removal or mass delete events, which supports security, compliance, and activity auditing.

File and folder monitoring may be used for files, folders, and subfolders, as well as selections of files, folders, and subfolders.
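As an illustration of how a mass delete event could be recognized, the following Python sketch counts delete events inside a sliding time window and reports the moments at which a limit is reached. The function name, the 60-second window and the limit of 100 deletions are assumptions for this example, not defaults of any particular product.

```python
from collections import deque


def find_mass_deletes(delete_times, window_s=60, threshold=100):
    """Return the timestamps at which `threshold` deletes fell within `window_s`.

    `delete_times` is a list of delete-event timestamps in seconds,
    sorted in ascending order. Hypothetical helper for illustration only.
    """
    window = deque()
    alerts = []
    for t in delete_times:
        window.append(t)
        # Drop events that are older than the window.
        while window and t - window[0] > window_s:
            window.popleft()
        if len(window) >= threshold:
            alerts.append(t)
    return alerts
```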

How do I set alerts on file server performance?

To set advanced alerts on file server performance, you can use Checkmk. The monitoring solution records performance peaks and automatically informs administrators about anomalies, such as unusually high data movements.

By monitoring performance, IT administrators have visibility over the CPU utilization, memory consumption, disk I/O and network performance of their file servers. They can also keep track of the input/output operations per second (IOPS) of a file server. In this way, they can identify bottlenecks and eliminate them immediately.
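IOPS is typically derived from two readings of a cumulative operation counter taken some time apart. The Python sketch below shows the arithmetic; the function name is hypothetical, and where the counter comes from depends on the operating system.

```python
def iops(ops_before, ops_after, interval_s):
    """Compute the operations per second between two counter readings.

    `ops_before` and `ops_after` are cumulative counts of completed
    read/write operations; `interval_s` is the time between the readings.
    """
    if interval_s <= 0:
        raise ValueError("interval_s must be positive")
    return (ops_after - ops_before) / interval_s
```

If the counter rises from 1,000 to 4,000 operations within 10 seconds, the result is 300 IOPS.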