What is network monitoring?

Network monitoring is a complex and vital topic for any company. Knowing its benefits and sub-areas is crucial.

Network monitoring is a multifaceted process that involves tracking activities within a network to identify issues, address problems, optimize performance, and maintain overall efficiency. It encompasses overseeing computer networks, including devices, routers, servers, and connections, to ensure reliability and optimal performance. This is done using network monitoring software that collects data, analyzes it, and presents it to network administrators so that they can take action—providing real-time alerts where necessary for prompt response to network events.

What was once a simple task on the small networks of the past has become staggeringly complex as networks have grown ever larger and more varied. Network monitoring protocols, and the tools built on them, were created to help administrators wade through the numerous hosts and devices in need of monitoring. The metrics collected through these protocols, and the network monitoring services that make use of them, are at the core of network monitoring.

Network monitoring is not limited to the health and performance of a network. It also includes subtopics like network performance monitoring, network security monitoring, and plenty of others. All or most of these are supported by a network monitoring system that gives the administrator a holistic view of the infrastructure.

Furthermore, it is important to note that different types of networks, such as LAN, PAN, WLAN, and virtual networks, may require customized monitoring strategies and tools. The same applies to different infrastructure configurations, such as hybrid and cloud networks.

TL;DR:

Network monitoring ensures a network’s health, performance, and security, keeping operations smooth and minimizing disruptions.

- All devices, connections, and services are observed in real time, allowing administrators to respond proactively to issues.

- Performance, traffic, and security monitoring reveal bottlenecks, unusual activity, and compliance risks before they escalate.

- Holistic coverage across physical and virtual infrastructure provides a complete view of the network.

- Rules-based setups and network topology maps make managing large and complex networks more efficient.

Who is network monitoring for?

Simply put, monitoring is like taking the pulse of an infrastructure. Anyone responsible for preventing issues, troubleshooting, or acting on malfunctions has a clear interest in network monitoring. This includes everyone in charge of operating the various hosts and devices that make up a network infrastructure, not just those who set them up. Anybody tasked with maintaining or operating a network-connected device, whether physical or virtual, should consider monitoring it.

In modern enterprises, IT teams rely on network monitoring to manage complex, internet-dependent infrastructures and ensure business continuity. In larger organizations, this function is often handled by a dedicated department specializing in network oversight.

The security team often performs network monitoring as well, since constant monitoring is also how a network is kept operating securely. Network security monitoring is an important branch of monitoring, as a network has to both perform well and be secure from outside attackers to be considered fully “healthy”.

Why is network monitoring important?

A high-performance network is at the core of every company’s IT. Achieving it means ensuring that the network infrastructure operates to the best of its capabilities, without bottlenecks, hiccups, or errors. This is of vital importance for the whole company: an efficient network is crucial for offering products or services and for employees to work effectively. Regardless of the company’s industry, maintaining business operations requires a fully functioning network. A slow or unreliable network causes disruptions that can cost a company dearly in revenue, reputation, and customer satisfaction.

Network monitoring is there to prevent all this or, at the very least, to make it possible to act quickly when network issues arise. Effective network monitoring plays a crucial role in reducing downtime by enabling early detection and rapid response to network failures and network performance issues. Without a monitoring system in place, it is impossible to identify potential problems in advance. Administrators are left to react after problems have occurred, with no way to spot them in time or to pin down their causes. Even worse, without accurate monitoring it becomes guesswork as to where and why the issue occurred, with no guarantee of prevention. Minimizing disruptions through proactive monitoring can lead to significant cost savings for organizations.

Benefits of network monitoring

There are many key advantages to network monitoring, some of which are easy to imagine.

1. Enhanced network real-time visibility

A network monitoring service gives administrators a comprehensive, real-time view of the entire network. Knowing where components are, how they are functioning, and when they fail is an obvious and critical benefit.

2. Infrastructure topology and performance mapping

Monitoring all hosts enables the creation of a complete topology of your infrastructure, mapping all the hardware and software across the network. By tracking the performance of each component, administrators can identify:

- Where more powerful hardware is needed.

- Where systems are not scaling properly.

- Where traffic and network congestion occurs.

This allows teams to know when the infrastructure needs updates or upgrades—without manually checking every piece.

Finally, early detection of issues through real-time monitoring helps prevent problems and maintain optimal network performance.

3. Improved security and compliance

A full view of hardware and software makes it easy to detect changes as they happen. Real-time monitoring tools highlight these changes instantly, enabling quick action against compliance or security violations.

While network monitoring tools differ from network security or intrusion detection systems by focusing on internal network issues, they still help identify and mitigate compliance and security risks before these can affect the organization. Network monitoring is therefore vital not only for operations but also for legal and security considerations.

4. Ensuring SLA compliance

Monitoring delivers performance insights, helping organizations meet and maintain service level agreements (SLAs).
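As a rough illustration of how monitoring data can feed into SLA tracking, the sketch below computes availability from a list of periodic check results and compares it with a target. The function names, the one-minute check interval, and the 99.9% target are assumptions made for this example and are not taken from any specific tool.

```python
def availability_percent(check_results):
    """Return the share of successful checks as a percentage."""
    if not check_results:
        raise ValueError("no check results to evaluate")
    successful = sum(1 for ok in check_results if ok)
    return 100.0 * successful / len(check_results)


def meets_sla(check_results, target_percent):
    """True if the measured availability reaches the agreed target."""
    return availability_percent(check_results) >= target_percent


# Assumed example: 1,440 one-minute checks in a day, 3 of which failed.
results = [True] * 1437 + [False] * 3

print(round(availability_percent(results), 3))  # 99.792
print(meets_sla(results, 99.9))                 # False
```

Three failed minutes in a day are already enough to miss a 99.9% target, which is why SLA reporting usually relies on continuous measurements rather than occasional spot checks.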

Network monitoring vs. network management explained

Network monitoring is one of the processes that makes up the larger topic of network management.

Think of network monitoring as keeping a constant eye on your network’s health: tracking uptime, performance, and potential issues in real time provides the ongoing insights that allow for proactive management and optimization. Network management, on the other hand, goes a step further: it’s about proactively configuring, optimizing, and planning the entire network ecosystem to ensure smooth, long-term operations.

While network monitoring provides visibility into the current state of the network, network management is the process of taking proactive action based on monitoring data, such as capacity planning and policy adjustments, to prevent problems before they occur. As noted in a study published by MDPI (Symmetry journal), network monitoring is considered a foundational component within the broader scope of network management, which encompasses both strategic and operational tasks.

How to monitor networks?

Monitoring networks is done with various network protocols and a suite of monitoring tools that consists of at least one service and one manager, and more often several of each. The service, also known as an agent, may be pre-installed on network devices or may have to be installed and configured manually. The manager is the software that collects data from across the network and analyzes it, creating reports and dashboards and handling alerts. This manager is the central monitoring software, usually installed on a network monitoring server. Agents are often present but not always: monitoring through vendor APIs is a recent development in network monitoring that does not need an agent.

Monitoring the network comes down to collecting metrics and sending them to the manager, which is also where the whole network monitoring system is configured. Which data to analyze, how to present it, under what conditions to trigger an alert, which actions to run automatically in response to events, and more are all defined by the network administrators at configuration time. How exactly this is done depends on the network monitoring tool of choice.

A monitoring solution usually makes it possible not only to monitor hosts and their services but also to manage them. This includes, among other possibilities, configuration capabilities that allow the administrator to remotely set up and modify the configuration of network devices. Managing your network is therefore often done through a monitoring manager.

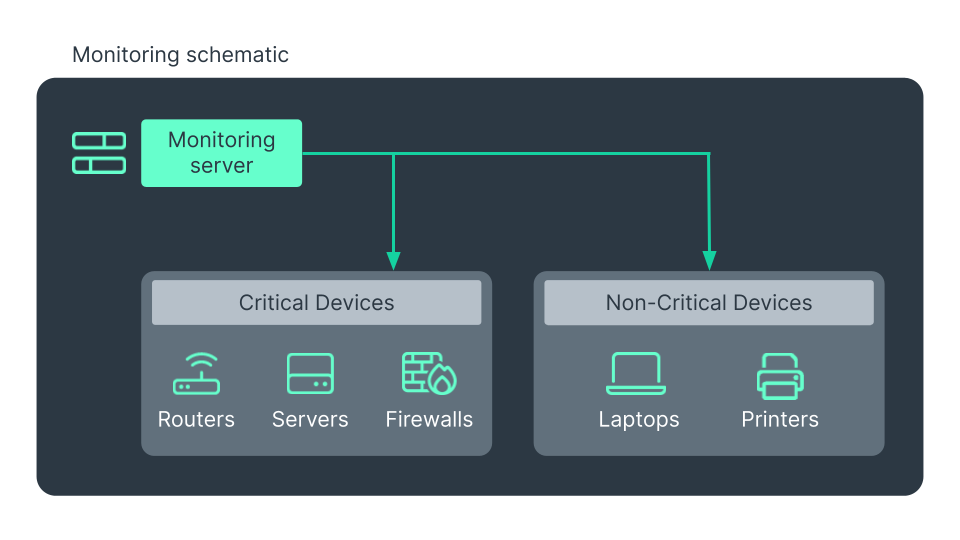

What network components need to be monitored?

It is easy to think for too long about what needs to be monitored when setting up a solution. Given that every device usually has to be set up for monitoring, and that more monitored devices mean more collected data, it is understandable to try to skip some devices. Every new set of data is a burden in terms of both computing and human time, and some administrators may find it reasonable to limit monitoring to what matters most to them. However, this means excluding important pieces of your infrastructure that can be both the cause and the explanation of future issues.

Therefore, the answer to what network components need to be monitored is: all of them. You should always attempt to monitor your entire IT infrastructure, preferably with an all-in-one approach. Only by taking a holistic approach to network monitoring is it possible to have a complete, and consequently more accurate, view of what is happening on a network. Ignoring part of the infrastructure creates the risk of having tiny, apparently innocuous, blind spots in your monitoring. Yet, as everything is interconnected, these ignored spots will sooner or later be the cause of problems and disruptions that could have been avoided had they been monitored from the beginning.

Considering specifically what components to monitor, network monitoring is generally about checking devices like routers, switches, access points, firewalls, sensors, UPSs, and network printers. These are only the bare minimum, though. All hosts, regardless of whether they are running Windows, Linux, Unix, or anything else, have to be included in network monitoring, along with web servers, virtual and cloud servers, and virtual machines.

When monitoring needs span diverse scenarios — from data centers and extensive on-premises infrastructures to cloud resources — it is crucial to ensure comprehensive coverage across all types of deployments. A modern network monitoring service is inherently capable of overseeing both physical and virtual devices. Managed service providers often rely on comprehensive monitoring solutions to efficiently manage multiple client networks and their components within a unified platform.

What metrics need to be monitored?

To effectively monitor a network and ensure its smooth operation, it’s important to focus on a variety of metrics that together provide a clear and complete picture of network health and performance. Below, these different types of metrics are outlined to help guide what to monitor and why.

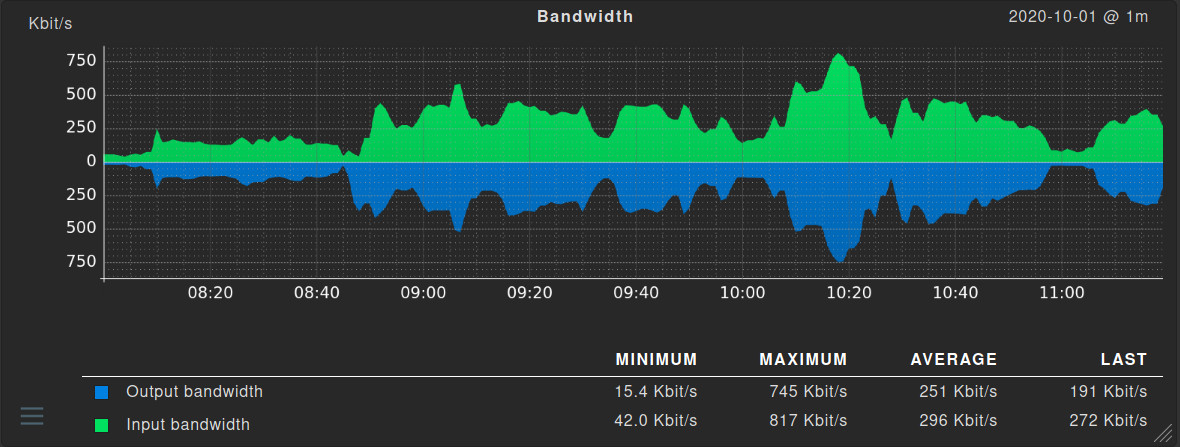

Core network metrics

The most obvious parameters to monitor are those that directly indicate an unhealthy network: packet drop rates, error rates, and bandwidth. Alongside these comes the state of the ports on your network devices, tracked through appropriate port monitoring. These metrics give you the basics of whether and how well the network itself is working.
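To make these core metrics more concrete, here is a minimal sketch that derives bandwidth utilization, error rate, and discard rate from two readings of an interface's counters. The `InterfaceSample` structure, its field names, and the sample values are assumptions for illustration. A real tool would read such counters from the device, for example via SNMP, and would also have to handle counter wrap-around, which this sketch ignores.

```python
from dataclasses import dataclass


@dataclass
class InterfaceSample:
    """One reading of an interface's cumulative inbound counters (hypothetical layout)."""
    timestamp: float   # seconds
    in_octets: int
    in_packets: int
    in_errors: int
    in_discards: int


def interface_metrics(prev, curr, link_speed_bps):
    """Derive rates from the difference between two counter readings."""
    interval = curr.timestamp - prev.timestamp
    if interval <= 0:
        raise ValueError("samples must be in chronological order")
    bits = (curr.in_octets - prev.in_octets) * 8
    packets = curr.in_packets - prev.in_packets
    errors = curr.in_errors - prev.in_errors
    discards = curr.in_discards - prev.in_discards
    return {
        "utilization_percent": 100.0 * bits / (interval * link_speed_bps),
        "error_rate_percent": 100.0 * errors / packets if packets else 0.0,
        "discard_rate_percent": 100.0 * discards / packets if packets else 0.0,
    }


# Assumed example: two readings taken 60 seconds apart on a 1 Gbit/s link.
previous = InterfaceSample(0.0, 0, 0, 0, 0)
current = InterfaceSample(60.0, 375_000_000, 500_000, 50, 250)
print(interface_metrics(previous, current, link_speed_bps=1_000_000_000))
```

Working with differences between readings rather than raw counter values is what turns ever-growing totals into rates that can be graphed and compared against thresholds.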

Hardware-specific metrics

In coordination with the above, hardware-specific metrics like CPU temperature and usage, occupied and free RAM, fan speeds, and power supply voltages are vital for knowing the overall health of network devices. Along with the traffic metrics cited before, these give a mostly complete idea of what is causing, or could cause, problems.

Service-related metrics

Next, more service-related metrics are useful to collect. For firewalls, these are their general status, the number and type of open ports, and the active connections. In VPN monitoring, track at least the status of the tunnels and their level of availability. For software in general, the version, the status (running, installed, stopped, etc.), and any error messages are the first metrics to take into consideration.

Wireless network (WLAN) metrics

Modern infrastructures have both wired and wireless network connections. WLAN monitoring means monitoring the status of all access points, their signal strength, the noise levels, and the full list of connected devices. Since a functioning WLAN is highly dependent on external influences, it is essential for a company to include the infrastructure components of the wireless environment in its monitoring.

What is network performance monitoring?

Network performance monitoring is concerned with the actual performance of the network: how efficiently it operates and where its bottlenecks are. It aids network administrators and analysts in gathering network data, allowing them to measure performance variables and identify potential issues or risks. A network monitoring tool analyzes the performance metrics, identifies where bottlenecks and congestion are, and helps to increase the throughput of the network once these are fixed. Performance monitoring enables early detection and resolution of routing issues and network failures: by tracking performance fluctuations, it helps identify trends and problems that may affect network reliability, helping to maintain network health and prevent downtime.

Network performance monitoring is not only focused on fixing problems, but also on ensuring that information flows through your infrastructure as fast as possible, with no interruptions. Otherwise, performance issues can cause anything from minor inconveniences, like intermittent disconnections, up to a network slowing down to a crawl, effectively making it unusable. By implementing performance monitoring in your larger network monitoring efforts, it is possible to know where the network is struggling and why, and to take action.

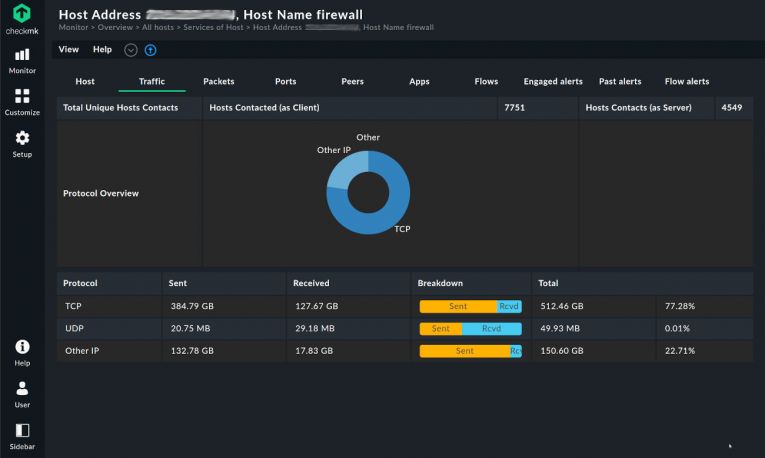

What is network traffic monitoring?

Network traffic is the sum of data moving across a network at a given moment. All packets going through a network constitute the overall traffic.

Monitoring network traffic is a key element of both network security and performance monitoring. It matters for performance because knowing the origin and type of the packets tells you more about the network’s requirements and shortcomings. It matters for security because traffic is an important indicator of possible malware, intrusions, and unauthorized usage of the network.

Network traffic monitoring is often, but not necessarily, real-time monitoring as well. With a constant visualization of the flow of traffic, it is easier to see where traffic originates and where it goes, which is basically what network traffic monitoring is all about. While simple packet capturing is sufficient to understand how traffic behaves on a network, network flow monitoring is specifically designed for this purpose. Protocols like NetFlow have been developed to provide a clear and immediate view of network traffic trends. By summarizing or sampling the overall traffic, they let you monitor network traffic more efficiently than a general capture of every packet going through all your hosts, which is a rather expensive effort.
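The kind of question flow data answers ("where does traffic originate and where does it go?") can be sketched in a few lines. The example below assumes flow records have already been received and decoded into dictionaries. The record layout and the `top_talkers` helper are hypothetical and do not reflect the wire format of NetFlow, sFlow, or IPFIX.

```python
from collections import Counter


def top_talkers(flow_records, n=3):
    """Sum bytes per source address and return the n largest senders."""
    bytes_by_source = Counter()
    for record in flow_records:
        bytes_by_source[record["src"]] += record["bytes"]
    return bytes_by_source.most_common(n)


# Hypothetical, already decoded flow records (documentation address ranges).
flows = [
    {"src": "192.0.2.10", "dst": "198.51.100.5", "bytes": 1_200_000},
    {"src": "192.0.2.11", "dst": "198.51.100.5", "bytes": 300_000},
    {"src": "192.0.2.10", "dst": "198.51.100.9", "bytes": 800_000},
    {"src": "192.0.2.12", "dst": "198.51.100.7", "bytes": 50_000},
]

print(top_talkers(flows, n=2))
# [('192.0.2.10', 2000000), ('192.0.2.11', 300000)]
```

Because each record already summarizes many packets, this aggregation stays cheap even when the underlying traffic volume is large.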

What is network security monitoring?

The last but certainly not least subset of network monitoring is network security monitoring. With the increased reliance on networks of companies in every industry, the quality and quantity of data that is exchanged through them has also increased. Sensitive data are present on any network and need to be protected. Ensuring their safety from unauthorized eyes is of primary importance.

Network security monitoring is about monitoring components and metrics that may indicate less than optimal security. Connected devices, attempted access from an unauthorized device, frequent connection attempts on a closed port, unusual traffic coming from an otherwise quiet host, and many other signals like these may suggest something suspicious is happening in your network. Most network monitoring solutions, Checkmk included, integrate these checks in order to keep security at the highest possible level. With an adequate alert system, security threats such as intrusions and possible holes in your network are quickly caught and reported.
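One of the signals mentioned above, frequent connection attempts on a closed port, can be illustrated with a small sketch. The event format, the list of closed ports, and the threshold of ten attempts are assumptions chosen for the example. Real monitoring tools obtain such events from firewall logs or flow data and apply their own logic.

```python
from collections import Counter


def suspicious_sources(events, closed_ports, max_attempts):
    """Return sources that hit closed ports more often than max_attempts."""
    attempts = Counter(
        event["src"] for event in events if event["dst_port"] in closed_ports
    )
    return sorted(src for src, count in attempts.items() if count > max_attempts)


# Hypothetical connection events collected over one observation window.
events = (
    [{"src": "203.0.113.7", "dst_port": 23}] * 12    # repeated probing of a closed port
    + [{"src": "192.0.2.20", "dst_port": 23}] * 2    # occasional stray attempt
    + [{"src": "192.0.2.21", "dst_port": 443}] * 50  # normal traffic to an open port
)

print(suspicious_sources(events, closed_ports={23, 3389}, max_attempts=10))
# ['203.0.113.7']
```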

Getting started with network monitoring

With all the benefits and importance of network monitoring, it is clear that the question should not be why to monitor but how. There are countless network monitoring services, with plenty of different features and focuses. To choose one, it is important to be aware of what they can monitor, what network monitoring protocols they support, and how they monitor the network. Technical differences, like whether a solution is agentless or agent-based or whether it uses vendor APIs to collect metrics, should be considered as well before deciding on a particular network monitoring software solution.

These are the fundamental, technical, deciding aspects for getting started with network monitoring. We will discuss them one by one in the following sections.

How to find the best network monitoring tool

While the “perfect” network monitoring tool doesn't exist, there are a few essential features a solution must include to be considered among the best. Typically, the core requirements fall into five key parameters:

1. SNMP support

Monitoring the health and performance of your network means having at least SNMP (Simple Network Management Protocol) support in your monitoring service. This fundamental monitoring protocol is specifically designed to check the overall health of network devices and can also manage them.

2. Network traffic flow monitoring

Support for protocols like NetFlow, IPFIX, and sFlow is essential. These protocols track where traffic is going and how it flows across the network, providing insights that aid in upgrade planning, performance monitoring, and disruption prevention.

3. Real-time issue detection and alerts

The tool should actively monitor the network and generate real-time alerts when issues arise. This is one of the most valued features, as it provides peace of mind and enables immediate response when problems occur.

4. Clear and accessible data visualization

A good tool should provide visual dashboards, network topology maps, and an intuitive interface. Fast access to actionable data enables IT teams to diagnose and resolve issues more quickly.

5. IP monitoring and network scanning

Built-in IP scanning and discovery helps automate detection of both new and existing infrastructure. This ensures the network topology remains accurate and up to date with minimal manual input.

What network monitoring protocols exist?

The exchange of metrics for network monitoring is done through the use of a network monitoring protocol. There are many of them, not all of which are supported by all network monitoring systems, and some are also not in use anymore.

The major one is still SNMP (Simple Network Management Protocol). It includes not only monitoring capabilities but also the managing of the supported devices. It is a widespread protocol that is already pre-installed on many network routers, switches and more. SNMP monitoring is a vast topic, with its dedicated page.

After SNMP, in order of usage if not importance, comes the family of flow-based protocols: NetFlow, sFlow, and IPFIX. These are designed to analyze network traffic “flows” and are thus especially useful in network performance monitoring and security monitoring. When network flow monitoring is mentioned, it usually means that one of these protocols has been implemented.

All these protocols are OS-agnostic. They work equally well in Linux network monitoring and Windows network monitoring. A Windows-specific option is WMI, with capabilities that mimic those of SNMP while adding a few more features. Under Linux, log analysis through an implementation of the Syslog protocol or accessing each device with SSH are very rudimentary methods of monitoring.

For network traffic monitoring, RMON is often implemented as a traffic sniffer and analyzer. Being an extension of SNMP, RMON is found wherever SNMP is too, and comes pre-installed on many network devices.

Agentless monitoring with SNMP

The Simple Network Management Protocol (SNMP) does make use of agents in its monitoring. It may therefore seem counterintuitive to talk of agentless monitoring with SNMP. The reason is one of semantics. An SNMP agent is nowadays pre-installed on many network devices, and it often needs no configuration before monitoring can start (other than perhaps activating it). The SNMP manager can poll it without the network administrator having to do anything.

In this specific situation, SNMP monitoring is considered agentless because the agent is transparent to the administrator: it requires no tinkering or pre-configuration. SNMP then behaves as if the agent did not exist at all, as with a proper agentless monitoring solution. Thus, while technically being an agent-based monitoring protocol, SNMP may also be considered agentless.

Monitoring via vendor APIs

Nowadays, some vendors do not use any of the classic network monitoring protocols and instead implement custom APIs in their devices. These are highly specific but highly customizable ways of monitoring a network. Generally, they are RESTful APIs that can be queried with a simple GET or PUT HTTP request. Lightweight and programmable, these vendor APIs have a steeper initial learning curve because they are not standardized, but they have the advantage of being easily automated.
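As a hedged illustration of what querying such an API might look like, the sketch below prepares a GET request and parses a JSON answer. Everything vendor-specific here is invented for the example: the host `device.example`, the path `/api/v1/interfaces`, the bearer-token authentication, and the shape of the response. A real device documents its own endpoints and formats. The request is only built, not sent, so the example runs without a device.

```python
import json
import urllib.request


def build_status_request(base_url, token):
    """Prepare a GET request for a hypothetical interface-status endpoint."""
    return urllib.request.Request(
        f"{base_url}/api/v1/interfaces",  # assumed path, not a real vendor API
        headers={
            "Authorization": f"Bearer {token}",
            "Accept": "application/json",
        },
        method="GET",
    )


def parse_interfaces(payload):
    """Map interface names to their status from an assumed JSON layout."""
    data = json.loads(payload)
    return {item["name"]: item["status"] for item in data["interfaces"]}


request = build_status_request("https://device.example", token="secret")
print(request.get_method(), request.full_url)

# Against a real device, urllib.request.urlopen(request) would send the request.
# Here a canned response stands in for the device's answer.
sample_response = (
    '{"interfaces": [{"name": "eth0", "status": "up"},'
    ' {"name": "eth1", "status": "down"}]}'
)
print(parse_interfaces(sample_response))
```

Keeping request building and response parsing in separate functions makes it easier to adapt the sketch to a different vendor, since usually only the path and the JSON layout change.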

By utilizing an API, network engineers can create repeatable code blocks that can be interconnected and which react, automatically, to monitoring events. This is essentially how most software functions, but network engineers use it to make physical and virtual changes to network infrastructure instead. Vendor APIs can expose all sorts of metrics, matching in quantity what usual network monitoring protocols like SNMP can.

A single API to monitor everything does not exist. Efforts to standardize have been made recently, OpenConfig being one but not the only one. Currently, most of these APIs are still wildly different from each other and require a learning phase before they become useful. Being highly specific to a single vendor limits their usage for network monitoring, but in themselves these APIs show a great deal of potential.

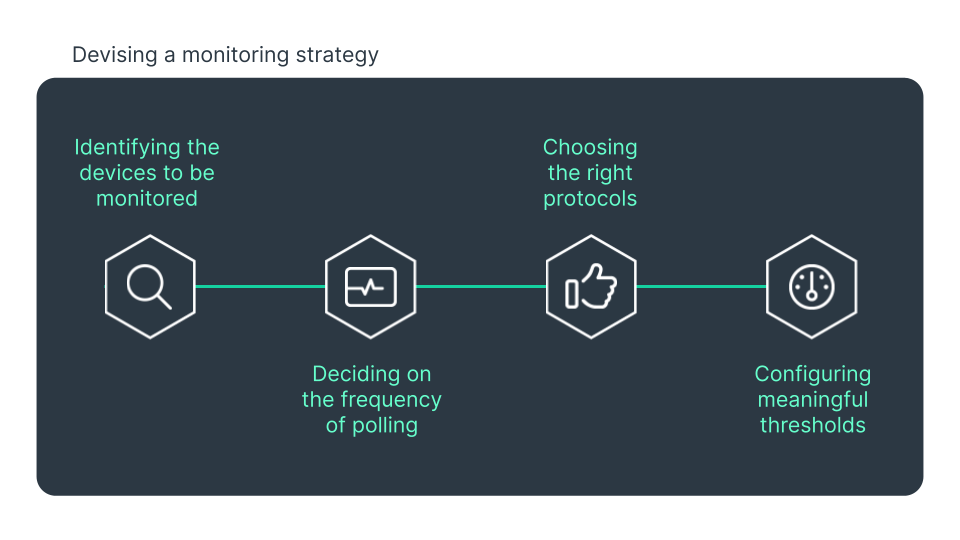

Best Practices for network monitoring

How to monitor a network depends on the specifics of that network and is hardly ever identical on two different networks. Still, a few best practices for effective network monitoring apply to any situation and company.

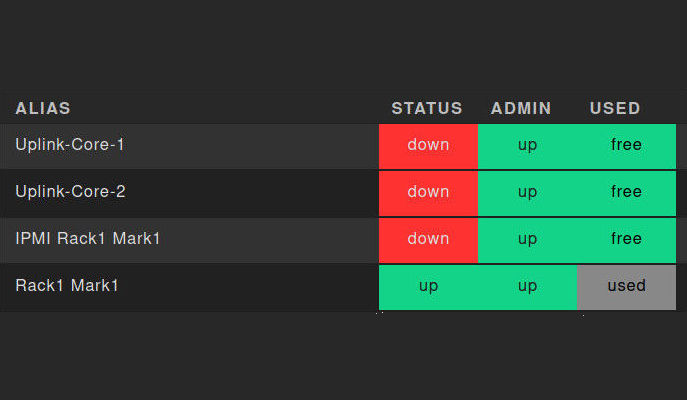

Why you should monitor all network ports

Port monitoring consists of checking the status and health of the physical ports on network devices. This means monitoring your router and switch ports, but is not limited to these. Port monitoring adds a further layer of protection against disruptions and intrusions that generic network monitoring cannot offer.

This is because monitoring your hardware ports makes it possible to discover faulty ones and replace or disconnect them before they cause an interruption in your network traffic. Duplex mismatches and configuration problems can be identified through a port showing a high rate of packet loss and errors. Without port monitoring, these would likely escape the attention of any network administrator.

Problems related to cables, like a failing or poorly connected one, can also be detected through port monitoring. Corrupted packets and a connection that goes intermittently up and down can be caused by the cable, not necessarily by the port itself. Yet through port monitoring these problems can be inferred.

Security-wise, a rogue user connected to one of your devices can be discovered through accurate port monitoring. Knowing the status of each of your network ports means identifying those that are in use, those that are not, and those that should not be. A security audit may require a port scan, which a network monitoring system that includes port monitoring can provide. Thus, port monitoring is also a component of network security monitoring.
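Identifying ports that are in use but should not be can be sketched as a simple comparison between observed port states and the set of ports expected to be active. The port names, the `"up"`/`"down"` status strings, and the `unexpected_active_ports` helper are assumptions for illustration.

```python
def unexpected_active_ports(port_status, expected_active):
    """Return ports that are up although nothing should be connected to them."""
    return sorted(
        port
        for port, status in port_status.items()
        if status == "up" and port not in expected_active
    )


# Hypothetical switch: observed state per port and the ports known to be in use.
port_status = {"Gi0/1": "up", "Gi0/2": "down", "Gi0/3": "up", "Gi0/4": "up"}
expected_active = {"Gi0/1", "Gi0/3"}

print(unexpected_active_ports(port_status, expected_active))
# ['Gi0/4']
```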

How can IP monitoring help

IP monitoring scans IP addresses to identify and include devices to monitor. This means that after installation, the monitoring tool automatically starts a scan of the entire network, or of a specific IP range or subnet, and then automatically integrates the devices found into the monitoring process. In the ideal case, during the scanning process the monitoring software recognizes the type of device and manufacturer and, based on this knowledge, automatically includes the relevant metrics into the monitoring.
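The scanning step can be sketched with Python's standard `ipaddress` module. How a host is actually probed (ping, SNMP query, TCP connect) differs between tools, so the probe is passed in as a function. The `discover_hosts` name and the fake probe used in the example are assumptions that keep the sketch runnable without touching a real network.

```python
import ipaddress


def discover_hosts(cidr, probe):
    """Return the addresses in a subnet for which probe(address) reports a response."""
    network = ipaddress.ip_network(cidr)
    return [str(address) for address in network.hosts() if probe(str(address))]


# Stand-in for a real reachability check: only these addresses "answer".
responding = {"192.0.2.1", "192.0.2.4"}

found = discover_hosts("192.0.2.0/29", probe=lambda ip: ip in responding)
print(found)
# ['192.0.2.1', '192.0.2.4']
```

In a real setup, each discovered address would then be classified by device type and handed to the monitoring configuration, as described above.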

But that is not all. Once the first scan is done, IP monitoring helps in tracking changes related to IP addresses in your network. Registered domains that do or do not point to the correct address can be caught by monitoring software that supports IP monitoring. Any change involving IP addresses falls within the sphere of IP monitoring.

As a consequence of proper IP monitoring, monitoring software can take care of setting meaningful threshold values as standard. Exceeding or falling below these values will trigger an alarm or notification. Rules can be applied to edge cases or specific, perhaps temporary, necessities of a particular host.
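A threshold check of this kind can be sketched as follows. The state names `OK`, `WARN`, and `CRIT`, the `(warn, crit)` tuple convention, and the example levels are assumptions for this illustration. Each monitoring tool defines its own states and defaults.

```python
def check_levels(value, upper=None, lower=None):
    """Classify a value against optional (warn, crit) upper and lower levels."""
    state = "OK"
    if upper is not None:
        warn, crit = upper
        if value >= crit:
            return "CRIT"
        if value >= warn:
            state = "WARN"
    if lower is not None:
        warn, crit = lower
        if value <= crit:
            return "CRIT"
        if value <= warn:
            state = "WARN"
    return state


# Exceeding a value: CPU utilization in percent with assumed levels 80/90.
print(check_levels(85, upper=(80, 90)))     # WARN
print(check_levels(95, upper=(80, 90)))     # CRIT

# Falling below a value: WLAN signal strength in dBm with assumed levels -70/-80.
print(check_levels(-75, lower=(-70, -80)))  # WARN
print(check_levels(-60, lower=(-70, -80)))  # OK
```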

IP monitoring helps in many cases but especially in two. For inexperienced administrators, it provides a layer of automation that can greatly ease the burden of monitoring and the worries related to it. By leaving the identification step to the network monitoring system through accurate IP scanning, errors and shadow IT are more easily avoided. When monitoring large networks, with hundreds or thousands of hosts, IP monitoring can set up the monitoring quickly and efficiently, allowing administrators to start monitoring the infrastructure in just a short time.

How to monitor large networks

Large networks have an order of magnitude of complexity that makes them a separate category in the world of network monitoring. Network device monitoring may take a long time on a network with thousands of hosts, and an excess of alerts is definitely a common problem. While it may be tempting to skip parts of the infrastructure, forgoing a holistic view of it, this is a poor choice. Ignoring some parts may be convenient initially, but it leads to all sorts of future risks that a company is in no position to accept.

For monitoring large and complex networks with different locations and differently connected components, monitoring software that works with a rule-based configuration is well suited. With a few simple steps, administrators can use rules to define a policy for monitoring a large number of similar devices, such as monitoring only the error rate of all access ports. Using rules, it is possible to apply the same setting to a large number of hosts, which immensely reduces the workload of configuring your monitoring setup. Rules also make alerts more focused and useful: for instance, a terminal that is regularly switched off can be set to not trigger an alert, as this is routine behavior rather than an exceptional case.

A policy can be applied to a large network with a handful of well-crafted rules. A rule-based monitoring solution then handles the monitored systems based on this policy. Changing the policy can be done anytime, with a few steps, and applied to a large number of devices all at once. Exceptions are also possible at any time and are documented via rules. Automation via rules makes including new hosts in the monitoring easier and less error-prone.
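The idea of rules that match groups of hosts can be sketched in a few lines. The label names, the settings keys, and the "first matching rule wins" evaluation order are assumptions for this example. Rule engines in actual monitoring products are more elaborate and may evaluate rules differently.

```python
# Rules are checked top to bottom, so exceptions go before general rules.
RULES = [
    {"match": {"role": "terminal"}, "settings": {"alert_on_down": False}},
    {"match": {"type": "switch"}, "settings": {"alert_on_down": True, "monitor": "port_errors"}},
]
DEFAULT_SETTINGS = {"alert_on_down": True}


def settings_for(host_labels, rules, default):
    """Return the default settings overlaid with the first rule whose labels all match."""
    for rule in rules:
        if all(host_labels.get(key) == value for key, value in rule["match"].items()):
            return {**default, **rule["settings"]}
    return dict(default)


# Hypothetical hosts described by labels rather than configured one by one.
print(settings_for({"type": "switch", "site": "berlin"}, RULES, DEFAULT_SETTINGS))
# {'alert_on_down': True, 'monitor': 'port_errors'}
print(settings_for({"role": "terminal"}, RULES, DEFAULT_SETTINGS))
# {'alert_on_down': False}
```

A new host only needs the right labels to pick up the existing policy, which is what keeps the configuration effort nearly constant as the network grows.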

Rules-based monitoring isn’t strictly necessary when dealing with a moderate number of hosts. Small companies may operate without such a solution by using a standard network monitoring service instead. But for large infrastructures, rules can be the best solution to monitor the network.

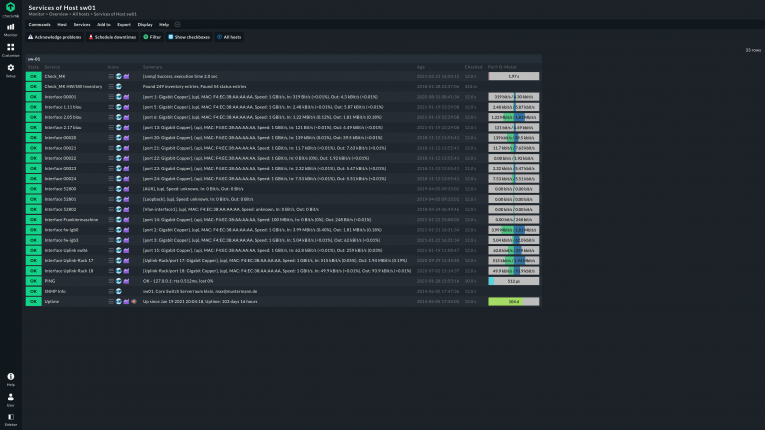

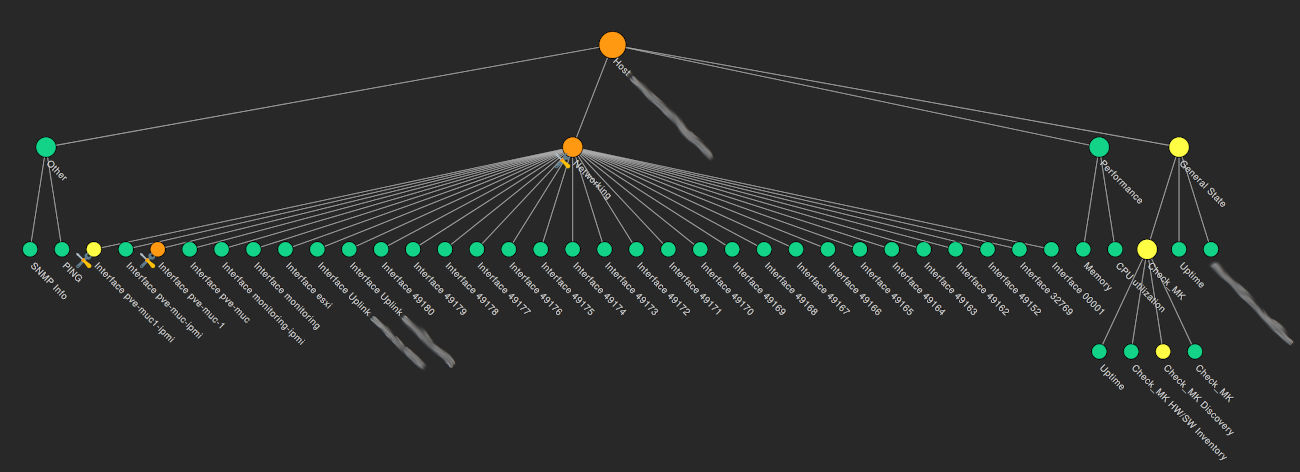

Network topology for a holistic view

Monitoring software that is able to detect all components in a network or in a specific IP range, and retrieve the data required for the monitored metrics, enables the administrator to obtain a holistic view of even complex network infrastructures. Via IP monitoring, a complete view of the network can be presented in an easy-to-read dashboard, facilitating the identification of errors.

Often the network topology is given as an overview map, showing how the network is connected, virtually and physically. Network managers can navigate this network map by clicking on the specific host that needs immediate attention. Other network monitoring tools display the network in a tree structure or as a table. The table display has the advantage that several pieces of information can be viewed at a glance in condensed form.

It is not only the network topology that can be visualized in a holistic view. Some network monitoring tools support viewing performance parameters graphically as well. The current bandwidth utilization, the status of individual ports, and abnormal error rates can all be shown in a graphical map, which enables the network administrator to quickly identify patterns, such as expected or unexpected performance peaks.

All this is possible by implementing your network monitoring solution holistically, considering all the hardware and software, without exceptions.

Network documentation to combat shadow IT

Shadow IT is a term referring to the use of IT-related hardware or software by a department or individual without the knowledge of the IT or security group within the organization. It can encompass cloud services, software, and hardware. The problem with shadow IT is that these hardware and software components are often consumer products. These usually do not have the necessary security features, or are not provided with the required security patches by their producers, so that such hardware or software can quickly prove to be a gateway for cyberattacks into the corporate network.

One of the common cases of shadow IT is the use of a cloud service without the knowledge of the corporate IT department. Sensitive data can end up on an unmonitored cloud, perhaps unintentionally, thus violating compliance rules.

A monitoring tool that implements a holistic view of the network can combat shadow IT. Scanning the infrastructure automatically creates network documentation that includes everything, permitted or not. The result is not only a topology of the network infrastructure, but also direct information about all hardware components and software solutions in the network.

Any good monitoring tool has an inventory function. This allows documenting the devices and software versions present on a network, and this data can be passed to a third-party solution, such as a license management system. This is useful not only for checking the compliance of every component on the network but also for alerting the administrator to smaller changes, like a service being updated to an unsupported version, which can pose a security risk.

Once the network documentation has been obtained, it is easy to discover connected hardware or installed software that should not be used. Without network monitoring, this would be a tedious manual check of each host.
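The comparison described above can be sketched in a few lines. This is only an illustration under stated assumptions: the `InventoryItem` record, the `find_unapproved` function, and the shape of the approved list are hypothetical and do not reflect the export format of any specific monitoring tool.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class InventoryItem:
    """One inventory entry; the field names are illustrative."""
    host: str
    software: str
    version: str


def find_unapproved(inventory, approved):
    """Return (item, reason) pairs for entries that are not permitted.

    `approved` maps a software name to the set of versions allowed for it.
    """
    findings = []
    for item in inventory:
        allowed_versions = approved.get(item.software)
        if allowed_versions is None:
            # Software that nobody approved: a shadow IT candidate.
            findings.append((item, "not on approved list"))
        elif item.version not in allowed_versions:
            # Known software, but in a version that is not approved.
            findings.append((item, "unapproved version"))
    return findings


if __name__ == "__main__":
    approved = {"nginx": {"1.24.0", "1.26.1"}}
    inventory = [
        InventoryItem("web01", "nginx", "1.26.1"),
        InventoryItem("web02", "nginx", "1.18.0"),
        InventoryItem("pc17", "filesync-client", "3.2"),
    ]
    for item, reason in find_unapproved(inventory, approved):
        print(f"{item.host}: {item.software} {item.version} ({reason})")
```

In practice the inventory would come from the monitoring tool and the approved list from a license or asset management system. The sketch only shows that the check becomes a simple lookup once the documentation exists.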

Conclusion

Monitoring a network involves many aspects, with plenty of possible pitfalls but also many benefits to reap once everything is set up. There is certainly no tool that fits every case, and compromises have to be accepted. However, a complete network monitoring solution can hardly do without IP monitoring, real-time monitoring, flow monitoring, and SNMP monitoring, all packed and presented in a modern user interface. These features make the life of network administrators easier and are hard to give up.

Making an inventory of your network, building a complete topology, and including security checks through port monitoring and the related alerts are far from secondary features. In the end, monitoring is not only keeping an eye on the infrastructure but also borders on managing it. Doing both is fundamental to having the most complete view of your infrastructure that can be achieved in a relatively uncomplicated way. Most network monitoring tools go in this direction, easing the work of network administrators and giving them the greatest possible control over what is happening on their networks.

Checkmk includes all these capabilities, making it a complete and scalable solution for your network monitoring needs. Whether you are happy with the simplicity of Checkmk Community or prefer the enhanced support of Checkmk Pro is up to you. Both versions offer a great deal of features to make network monitoring easy, effective, and accurate.

FAQ

What is open source network monitoring?

Open source network monitoring refers to monitoring tools that are open source. For instance, Checkmk is open source. This doesn't always mean free, though: many open source solutions charge a fee in exchange for better support, quicker updates, and additional features. Choosing a free open source network monitoring tool, such as Checkmk Community, is perfectly viable as long as it is acceptable to handle issues and configuration on your own.

What is Linux network monitoring?

Linux network monitoring refers to monitoring hosts running Linux in one of its many distributions. Linux itself comes with a series of simple commands for monitoring networks that are standard not only under Linux but also under many Unix-like operating systems. Tools such as htop, tcpdump, and netstat are available on virtually all Linux systems and offer rudimentary network monitoring capabilities. More advanced monitoring tools support Linux monitoring with richer features and a much friendlier user interface.

What is Windows network monitoring?

Windows network monitoring is the monitoring of Windows hosts. Monitoring tools generally support Windows systems through a few protocols and interfaces, such as SNMP or WMI. Performance, services, and the event log are taken into account when monitoring Windows, similarly to monitoring other operating systems.

What is network flow monitoring?

In monitoring, a flow is defined as a group of metrics about traffic. These flows are grouped in a special packet that includes a number of different pieces of information, such as the IP addresses of the sender and receiver, the source and destination ports, the Layer 3 protocol type, the classification of the service, and the router or switch interface. Network flow monitoring is done with a few specific network protocols, such as NetFlow and IPFIX, which specify how these packets should be created and transmitted.
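To make the idea of a flow more concrete, the sketch below groups observed packets by the fields listed above and adds up packet and byte counts per group. It is a simplified illustration only: the `FlowKey` type and the `aggregate_flows` function are hypothetical names, the addresses are documentation examples, and the code does not implement the NetFlow or IPFIX wire format.

```python
from collections import defaultdict
from typing import NamedTuple


class FlowKey(NamedTuple):
    """Fields that identify a flow in this sketch (illustrative names)."""
    src_ip: str
    dst_ip: str
    src_port: int
    dst_port: int
    protocol: int  # IP protocol number, e.g. 6 for TCP, 17 for UDP


def aggregate_flows(packets):
    """Sum packet and byte counts per flow.

    `packets` is an iterable of (FlowKey, size_in_bytes) pairs.
    """
    totals = defaultdict(lambda: {"packets": 0, "bytes": 0})
    for key, size in packets:
        totals[key]["packets"] += 1
        totals[key]["bytes"] += size
    return dict(totals)


if __name__ == "__main__":
    web = FlowKey("192.0.2.10", "198.51.100.5", 50123, 443, 6)
    dns = FlowKey("192.0.2.10", "198.51.100.53", 40000, 53, 17)
    observed = [(web, 1500), (web, 400), (dns, 80)]
    for key, stats in aggregate_flows(observed).items():
        print(key, stats)
```

A real exporter on a router or switch performs this kind of aggregation itself and sends the resulting records to a collector, where the monitoring tool evaluates them.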